A Simple Object Detection Example: A Chess Detector

- Melissa Montero

- May 14, 2023

- 8 min read

Updated: Aug 1, 2024

Artificial intelligence has numerous practical applications, including process automation in industries such as automotive assembly or fruit collection on farms. The core challenge in such applications lies in the detection and classification of various objects for subsequent manipulation. For example, in an automated engine assembly line, the ability to identify pistons, rings, and valves is necessary for robotic arms to select and arrange them correctly. Chess piece detection using a deep neural network provides a simplified toy example for experimentation that can generalize to more complex use cases such as automated assembly systems.

This article explores the various stages of a typical deep learning problem, using chess detection as a baseline problem. The successful detection of chess pieces is crucial for developing more sophisticated use cases, given that the same challenges are inherent in both scenarios.

Problem Description

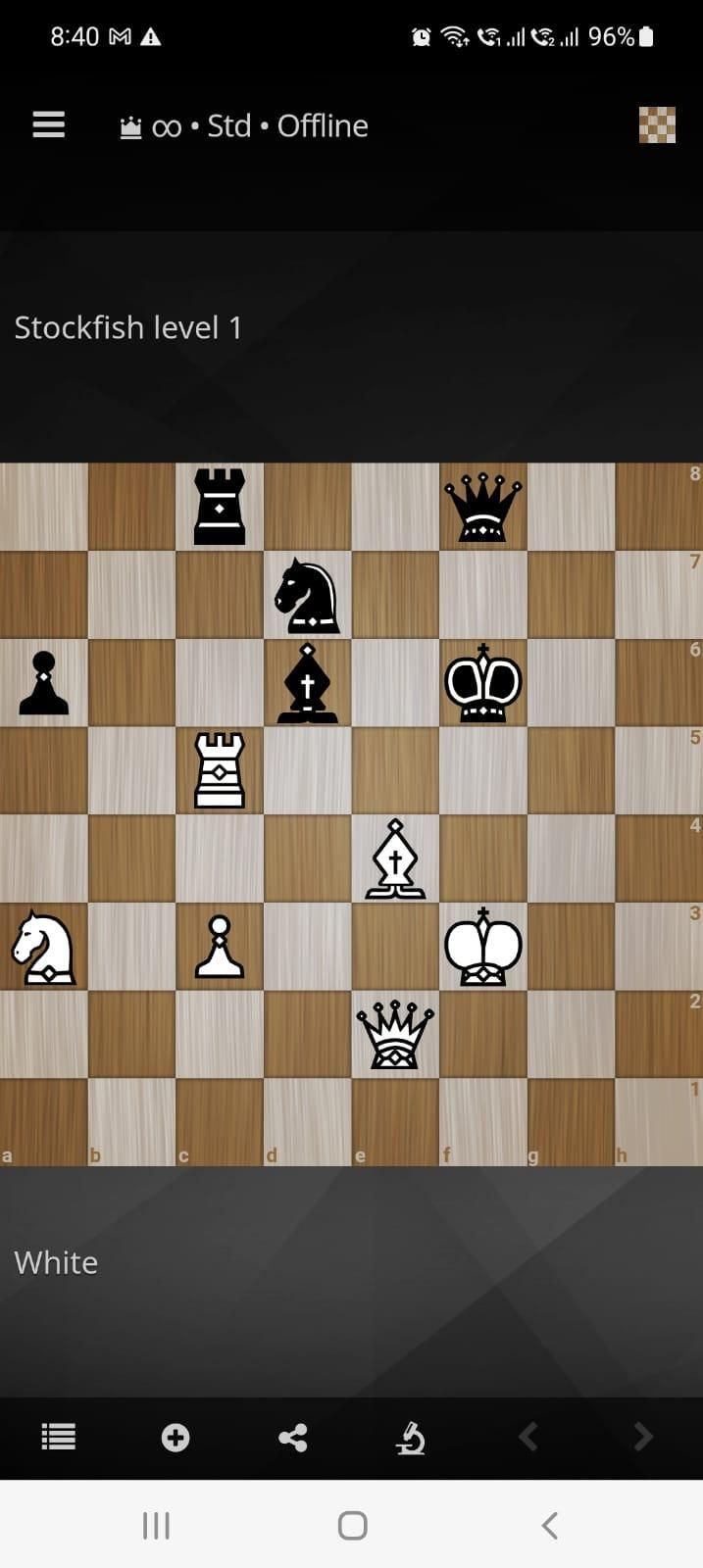

To begin with, it is essential to establish the problem that our network aims to solve, including the inputs and expected output. As illustrated in Figure 1, we consider a screenshot of a chess game played on a mobile device. The objective of this project is to develop a deep-learning pipeline that can process chessboard images similar to the one provided and automatically detect and classify each piece on the board. The output of the pipeline is an annotated image as shown in Figure 2, where each chess piece is enclosed within a bounding box indicating its type.

The reason behind feeding the pipeline with an uncropped image of the game is to simulate scenarios where the surrounding environment contains parts whose position can vary, and there is a need for detecting the working area.

Object Detection Processing Pipeline

In many deep learning applications, additional processing stages may be required to either adequate the data before the inference or post-process it and fine-tune the results. This pre- and post-processing do not necessarily involve a new deep learning process, but it can comprise some typical computer vision tasks and classical machine learning algorithms, among others.

The overall pipeline used in our example is depicted in Figure 3. The raw image from the game is fed to the system and processed by the chessboard detection algorithm, which is implemented using classical contour extraction algorithms. Once the board is extracted, the next stage involves the deep learning algorithm for detecting and classifying the chess pieces in the board. The output is a labeled version of the input image with corresponding bounding boxes and labels for each piece.

Dataset

The dataset is composed of 64 training images and 54 validation samples, all of them taken from different cellphone chess applications, varying the background theme and the piece's styles. Table 1 shows a list of the applications used for generating the dataset images:

Table 1. Mobile applications used for generating the dataset

Application Name |

Chess.com |

Lichess |

Chess24 |

Fide Online Arena |

In the case of the training images, they contain only 1 piece of every type per player, for a total of 12 pieces in the chessboard. All pieces are located in random positions. Figure 4 shows a sample training image.

The even distribution of piece representations within the dataset promotes balance and helps mitigate potential biases that may arise from the over-representation of common pieces, such as pawns. Additionally, a heatmap analysis helped to understand the most common locations of each piece and its distribution along the game board such as the one shown for the black knight in Figure 5.

In the case of the validation set, every image contains a chess game with a random number of pieces as shown in Figure 6.

Every piece in the data set is labeled with the corresponding bounding box, type, and player color. Note that we have an interest not only in recognizing the piece type but also in whether it is the black or white player.

Using different chess applications and themes allows the inclusion of a variety of pieces’ styles, leading to a better generalization of the detector. Figure 7 shows an example of different knight representations within the training dataset.

An interesting note regarding the dataset is its size. Given that transfer learning will be used for training the model, it is not required to keep a huge set of images to get a reasonable performance.

Results and discussion

Board Detection

The classic board detection algorithm works pretty well by thresholding the image and extracting the image contours, obtaining a high IOU (Intersection Over Union) with respect to the labeled boards. The results for all the datasets are shown in Table 2, the IOUs are mostly over 0.9.

Table 2. Board detection IOU for the training, validation, and test datasets (higher is better).

Dataset | Mean IOU | MIN IOU | MAX IOU |

Train | 0.988 | 0.948 | 1.0 |

Valid | 0.993 | 0.935 | 0.999 |

Test | 0.903 | 0.839 | 0.999 |

Some board detection examples were taken for each of the datasets, Figure 8 shows a sample of these images for the training dataset. Each figure contains the detection with the minimum and maximum IOU, plus a couple of random images. The detected board is highlighted in the figures with a blue square. After some analysis we noted that the minimum IOU is obtained when an extra border -generally above or below the board- is added to the detection. This failure is caused by low contrast between the board and the background or highlights in the background causing confusion in the contour detection.

Pieces Detection

With a small dataset of only 141 images, we decided to use transfer learning to train the YOLOv5 network. It is expected that transfer learning would allow us to get better results than training from scratch since we can take advantage of the shared domain. We initialized our model with the weights provided by Ultralytic’s YOLO repository, which were trained using the COCO object dataset. Specifically, we decided to test two different networks: YOLOv5s and YOLOv5x, corresponding to one of the smallest and the largest of the series, respectively. The objective was to determine if a small network would be sufficient for this problem and to see how much improvement can be gained with a larger model.

We started training using the default YOLOv5 hyperparameters, summarized in Table 3. As you can see, it includes a low augmentation that would help our dataset to have more variability.

Table 3. YOLOv5 default hyperparameters

Hyperparameter | Value |

Initial learning rate (lr0) | 0.01 |

Final learning rate (lrf) | 0.01 |

Momentum | 0.937 |

Weight decay | 0.0005 |

Warmup epochs | 3.0 |

Warmup momentum | 0.8 |

Warmup initial bias lr | 0.1 |

Box loss gain | 0.05 |

Classification loss gain | 0.5 |

Classification BCELoss positive_weight | 1.0 |

Objectness loss gain | 1.0 |

Objectness BCELoss positive_weight | 1.0 |

IoU training threshold | 0.20 |

Anchor-multiple threshold | 4.0 |

Focal loss gama | 0.0 |

Image HSV hue augmentation | 0.015 |

Image HSV saturation augmentation | 0.7 |

Image HSV value augmentation | 0.4 |

Image rotation | 0.0 |

Image translation | 0.1 |

Image scale | 0.5 |

Image shear | 0.0 |

Image perspective | 0.0 |

Image flip up-down(probability) | 0.0 |

Image flip left-right (probability) | 0.0 |

Image mosaic (probability) | 0.0 |

Image mixup (probability) | 0.5 |

Other parameters to take into consideration are the number of epochs, batch size, the amount of frozen layers, and the optimizer. Table 4 shows the values used for training:

Table 4. Mobile chess detection training parameters

Parameter | Values |

Number of epochs | 500 |

Batch size | 8 |

Freeze layers | 0.10 |

Optimizer | SGD |

YOLOv5s

Initially, we tested different values for the epochs, starting with 100, but noticed that higher epochs resulted in better performances, being around 300 where the losses started stabilizing. This can be seen in Figure 9. We noticed that 500 epochs were enough for training, but we selected the best model for inference detection that was not necessarily the last epoch model.

On the other hand, the model is composed of two parts: the backbone and the head layers. Since the backbone layers work as feature extractors and the head layers as output predictors, we decided to test freezing backbone network layers (avoid re-training them) to determine if it helped with the final results. The backbone consists of 10 layers, so we ran the experiments without freezing any layer and freezing the 10 layers of the backbone.

The selected optimizer was the YOLOv5 default Stochastic Gradient Descent (SGD) with a batch size of 8.

In Figure 10 you can see a comparison of the results between freezing and not freezing backbone layers for the YOLOv5s model. As you can see, the classifier loss takes more epochs to stabilize for the experiment that freezes the backbone, however, the final results are similar. Using the best model, the experiment without freeze obtained an F1 score of 0.987 for the validation and 0.809 for the test, while the case with backbone freeze obtained an F1 score of 0.966 for validation and 0.801 for the test. The main difference is a 0.02 in the F1 score for the validation dataset and both perform worse in the test dataset.

On the other hand, Figures 11 and 12 show the confusion matrix for validation and test datasets, respectively, for the experiment with no freezing. Both datasets accomplish a clear diagonal, meaning that most of the detections are correct, as we can expect with the F1 scores presented, the validation results have few outliers while the test results have more.

Taking a deeper look at the validation outliers we discovered that the detection is confusing the bishop piece with the knight pieces for the style shown in Figure 13. You can see that it doesn’t have the usual rounded shape and has a slot bigger than a usual bishop. It can be seen that, with some imagination, it can be confused with one of the knights that are looking up.

The model is also confusing the white king with the black king and the white pawn with the black pawn for the style shown in Figure 14, where the shapes have a thick black line.

On the other hand, inspecting the detection and confusion matrix for the test dataset, it is clear that it is confusing a lot more pieces. Specifically, the model is having trouble with the piece style shown in Figure 15. This figure shows the detections obtained from the inference using the best model of YOLOv5s without freezing. The bounding boxes indicate the piece's detection is correct, but the classification of the piece doesn’t make sense in most of the pieces. To understand what was going on with this style we created a couple of test images, using the same piece style but removing the gray circular background as shown in Figure 16. Our hypothesis was that the circular background could be confusing the network. Applying the inference to these images we got the results shown in Figure 16, where the pieces were detected correctly, except for the king which was confused with the rook.

Another common mistake in the test dataset results is to confuse a bishop with a pawn in piece styles like the one shown in 17.

Finally, Figure 18 shows some sample-labeled images from validation and test datasets, so you can have a qualitative sense of the chess detector's performance.

YOLOv5x

Figure 19 shows a comparison of the results between backbone freeze layers and no freezing layers for the YOLOv5x model. As you can see, the general behavior is pretty similar, obtaining a small improvement without freezing but at the cost of more memory usage and larger training times. The best model in this scenario obtained an F1 score of 0.96943 for validation and 0.83202 for testing when freezing the first 10 layers and 0.99258 for validation and 0.8609 for testing when all the layers were trained.

Figures 20 and 21 show the confusion matrix for testing and validation when the freeze is set to 0. As it is expected, we can see a clear diagonal in both with some outliers for the validation dataset and more outliers for the test dataset. The false positives are mostly caused by the same pieces exposed for YOLOv5s.

Going beyond digital!

The goal for every model training is to achieve enough generalization to react properly to unexpected scenarios. In order to stress our model and determine how well it performs in unexpected situations (in an empiric manner), we used a picture of a game from a chess book, as shown in Figure 22. The result of the detection is shown in Figure 23. It is possible to note that the model was able to detect and classify every piece in the chessboard correctly, demonstrating a good level of generalization.

What is Next?

Although we used a chess game as the subject of this project, it is worth mentioning that the same process and challenges can be extrapolated to more complex scenarios in the industry such as assembly lines, where the environment is not necessarily static and the location of the pieces can vary. We have demonstrated that using transfer learning and a model with a shared domain, allows us to speed up the training and achieve acceptable results in a short time, requiring fewer resources.

Tối qua mình đọc các bình luận trao đổi trên một diễn đàn, mình bắt gặp https://ea88site.com/ được chèn vào giữa câu chuyện. Mình bấm thử xem cho biết, chủ yếu là để xem cách trình bày và cấu trúc nội dung. Lướt nhanh thì thấy tổng thể khá gọn gàng, tạo cảm giác đáng tin cậy. Xem xong mình quay lại đọc tiếp các bình luận khác, chứ cũng không đào sâu thêm

I usually prefer concise introductions to entertainment platforms. The other day, I was browsing a few websites and saw many people discussing https://w88kia.jp.net/ , especially regarding online sports information. So, I was curious and checked out how they organized their content. I didn't delve into every detail, but spent some time looking at how the sections were divided and the interface.

This morning, while reading online comments, I noticed that đá gà thomo was being mentioned frequently. I clicked on it to see how it was presented and structured. A quick glance revealed a neat and trustworthy overall presentation. For me, such concise content is sufficient for me to grasp the basic information.

While reading the discussions, I noticed https://keobongdahomnay2.com/ being mentioned, so I decided to check it out. I only took a quick look at the overall picture and didn't delve into it in depth, but my initial impression is that the presentation is quite spacious, the layout is clear, and it's not overwhelming to look at.

Last night, while reading comments on a forum, I came across daga67 inserted in the middle of a conversation. I clicked on it to see what it was like, mainly to check the presentation and structure of the content. A quick glance showed that the overall presentation was quite neat and trustworthy. After that, I went back to read the other comments, without delving any deeper.